- Tesla's official account is actively promoting stories of visually impaired and elderly drivers relying on Full Self-Driving mode as their primary means of transportation, and the backlash is erupting for good reason.

- A 71-year-old man with deteriorating eyesight says he "never touched a wheel" during a test drive and was sold, while a 93-year-old woman's family celebrates that she can now drive alone — all being amplified by Tesla as heartwarming marketing rather than alarming liability.

- The disconnect between Musk publicly claiming "no human input at all" and Tesla's own fine print requiring "active supervision" isn't just misleading anymore — it's putting the most vulnerable road users and everyone around them in genuine danger.

It’s all fun and games to hype up Tesla’s Full Self-Driving mode on X, but what happens when real lives are at risk over false claims?

Full Self-Driving mode has been under heavy scrutiny lately. The name suggests the vehicle drives itself, but the mode isn’t full self-driving at all. Even Tesla’s own fine print for the mode states that it requires your “active supervision.” California ruled the name misleading and required Tesla to change its name to “Autopilot.” Meanwhile, Elon Musk has been proudly proclaiming that FSD doesn’t require drivers to watch the road at all, even posting staged videos of himself using the mode with the caption: “Tesla drives itself (no human input at all) through urban streets to highway to streets, then finds a parking spot.”

I’m not sure why Musk is allowed to make these kinds of claims without any legal intervention, especially when he has an army of fans who believe everything he says. They even believed that the nearly 7,000-pound Cybertruck could “serve briefly as a boat” in water. It sounds ridiculous, but that didn’t stop owners from attempting to cross bodies of water (and failing). I guess if his followers aren’t willing to sue when they end up sinking their trucks, Musk won’t face any legal consequences for his false promises.

But now other people’s lives may be at stake, and that has sparked a massive backlash on social media.

Tesla shares story of man with bad vision buying a Cybertruck for FSD mode

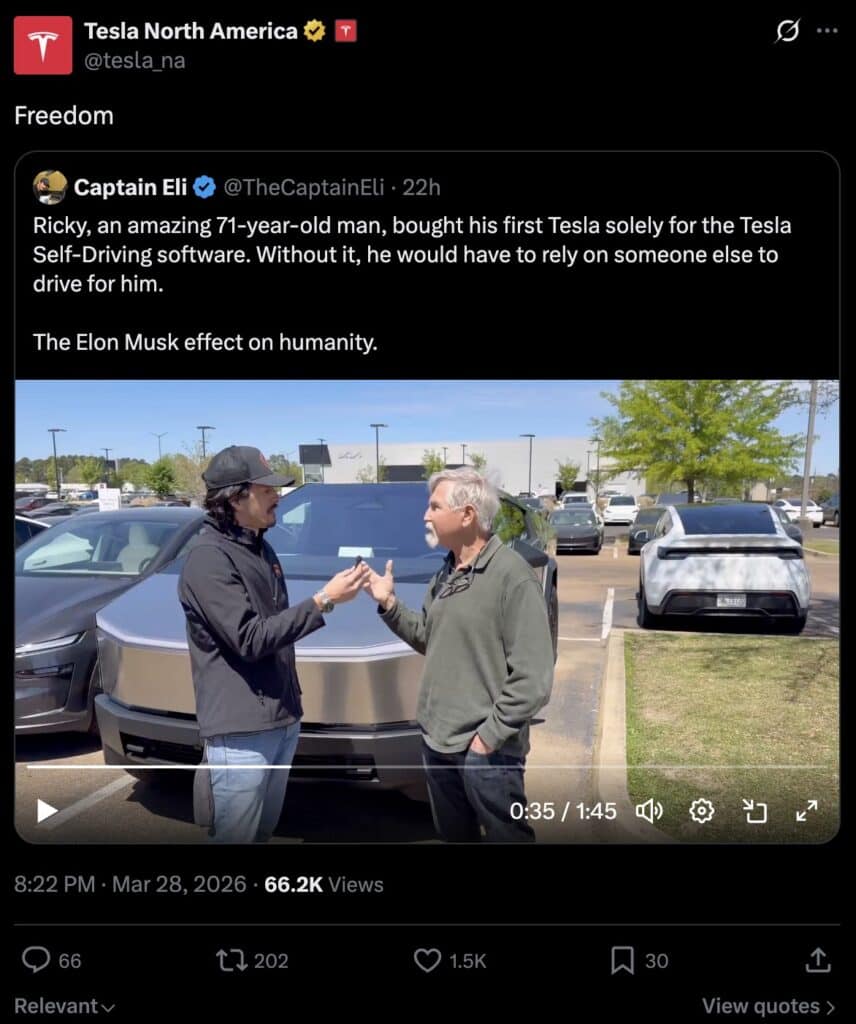

The official Tesla North America account has been caught sharing multiple tweets on X that involve people with various driving impairments relying on Tesla’s Full Self-Driving software. The first was a very fanboy-esque story about a 71-year-old who bought a Tesla for FSD, claiming he’d “have to rely on someone else to drive for him” without it. This was called the “Elon Musk effect on humanity.”

“My eyes are getting bad,” the old man said. He then explained that his eye doctor had offered him a “test drive” in the doctor’s own Tesla. “I never touched a wheel. That’s all I needed. I was sold.”

Tesla reposted this video with the reply “Freedom.”

The second story was a firsthand account from someone who used FSD after fracturing his elbow. He claimed he used the mode to avoid an ambulance ride. Tesla shared it with a heart emoji.

Tesla then reposted a corny video of a 93-year-old using Full Self-Driving mode to get to church. The older woman’s son claims: “Although she has always been a good driver, my mom can now drive without the fear or fatigue that can naturally come with age. No more relying on others for every trip. No more feeling stuck. This is true mobility that can spark new adventures in a still adventurous woman!”

These sound like feel-good stories at first, especially when you see Tesla drivers applauding heartwarming tales of FSD helping elderly and visually impaired people get around on their own. But as more people caught wind of Tesla North America sharing these stories, a major backlash followed.

Wrote one person on X: “Absolute madness!!!! I’ll wager everything I have that this Tesla tweet will end up as evidence in a court case about a Tesla FSD crash at some point. A plaintiff’s attorney will hold it up and say: ‘Tesla’s own official account promoted a testimonial from a vision-impaired buyer who purchased the vehicle specifically because he believed FSD could drive for him — and Tesla amplified that message to millions.’ This is reaching genuinely dangerous levels.”

While Tesla’s social media account appears to promote its Full Self-Driving system as a truly autonomous driving experience, its website states that it’s just a Level 2 driver-assistance system that still requires full driver attention. Not only that, but FSD is currently being investigated by the National Highway Traffic Safety Administration (NHTSA) over its inability to function properly under certain (common) conditions like fog. The contradictory messages and capability claims are starting to alarm many drivers. How would someone who is going blind or too old to drive take over properly, and quickly, when FSD fails? By Tesla’s own math, that happens a lot: FSD crashes three to four times as often as a human driver.

In fact, around the same time, another FSD video was going viral on X: one of a guy claiming FSD got him through a very dangerous scenario, handling it “perfectly.” However, the video shows the man going well over the speed limit to dangerously overtake a truck, rather than simply pulling over as a safe driver would in that buttcheek-clenching scenario. I can only imagine how that situation would go if the driver were a 90-year-old woman too tired to react quickly. Or a dude going blind.

Said another concerned X user: “No big deal, just Tesla endorsing people using FSD when they explicitly are not able to properly supervise/take over. Surely won’t have liability implications down the line.”

Tesla hasn’t made any explicit statements about endorsing impaired drivers who rely on Full Self-Driving mode, but it appears the carmaker is trying to convey that FSD offers freedom to people who would otherwise be unfit to be behind the wheel. And while Tesla never claimed drivers should use FSD without supervision, it’s still quite questionable to imply that drivers who can’t really respond to dangerous situations should drive with FSD. Or drive at all. But again, this is what happens when Tesla’s crazy claims keep going unchecked, even as vehicles drown and crash as a result.